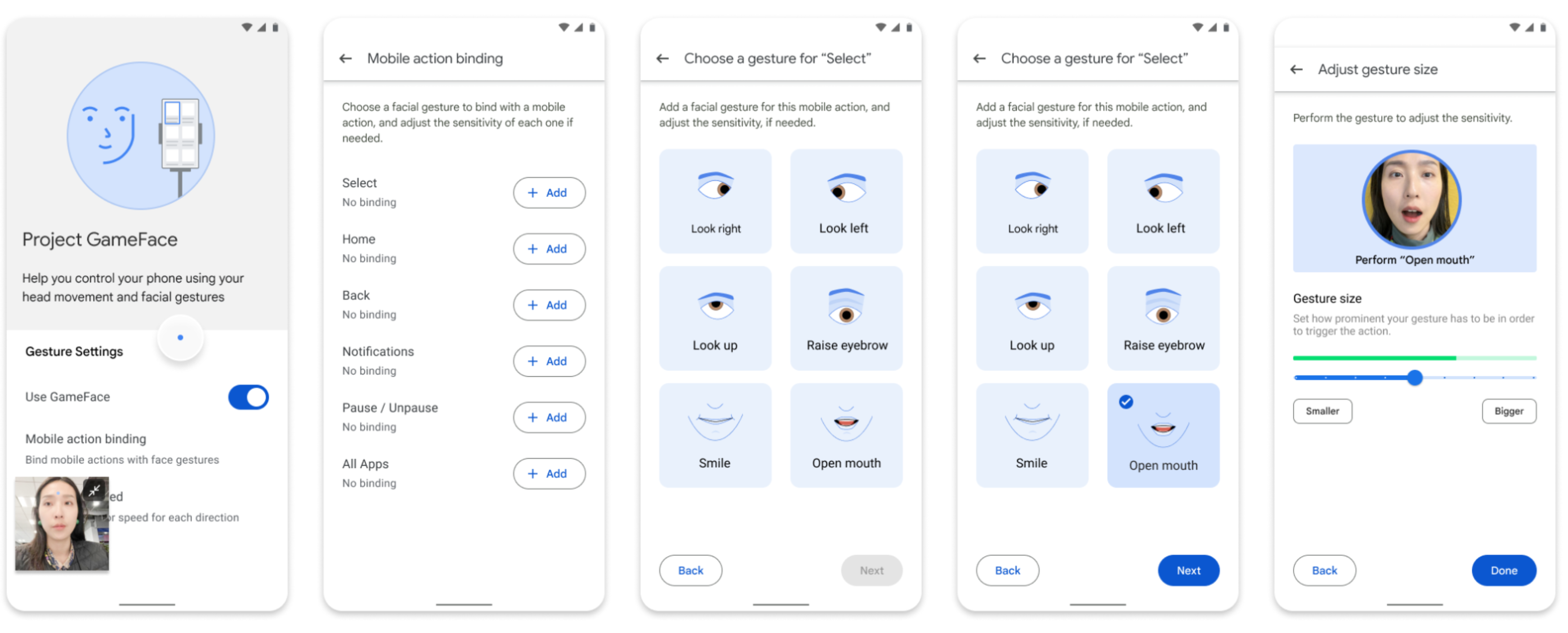

Google announced on Tuesday that the code for Project Gameface, its hands-free gaming “mouse” controlled by facial expressions, is now open source for Android developers. Developers can integrate the accessibility feature into their apps so that users can control the cursor with facial gestures or head movements, such as opening their mouth to move the cursor or raising their eyebrows to click and drag.

Project Gameface utilizes the device camera and a facial expressions database

Project Gameface, originally announced for desktops at last year’s Google I/O, uses the device’s camera and a database of facial expressions from MediaPipe’s Face Landmarks Detection API to manipulate the cursor.

“Through the device’s camera, it seamlessly tracks facial expressions and head movements, translating them into intuitive and personalized control,” explained Google in its announcement. “Developers can now build applications where their users can configure their experience by customizing facial expressions, gesture sizes, cursor speed, and more.”
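To make the configuration idea concrete, here is a minimal sketch of how per-user settings might map expression scores to cursor actions. It is not Google's implementation: the `GestureBinding` and `Config` types, the action names, and the score names `jawOpen` and `browInnerUp` are illustrative assumptions, and the scores are assumed to arrive as values between 0 and 1 from whatever face-tracking layer the app uses.

```python
from dataclasses import dataclass, field


@dataclass
class GestureBinding:
    """Hypothetical link between one facial expression and one action."""
    expression: str   # assumed name of an expression score, e.g. "jawOpen"
    threshold: float  # "gesture size": how strong the expression must be
    action: str       # illustrative action label


@dataclass
class Config:
    """Hypothetical per-user settings."""
    cursor_speed: float = 1.0
    bindings: list = field(default_factory=list)


def triggered_actions(scores, config):
    """Return the actions whose expression score meets its threshold."""
    return [
        b.action
        for b in config.bindings
        if scores.get(b.expression, 0.0) >= b.threshold
    ]


def move_cursor(position, head_delta, config):
    """Scale a head-movement delta by the configured cursor speed."""
    x, y = position
    dx, dy = head_delta
    return (x + dx * config.cursor_speed, y + dy * config.cursor_speed)


config = Config(
    cursor_speed=3.0,
    bindings=[
        GestureBinding("jawOpen", 0.6, "move_cursor"),
        GestureBinding("browInnerUp", 0.5, "click_and_drag"),
    ],
)

# One frame of assumed expression scores: mouth open, eyebrows relaxed.
frame_scores = {"jawOpen": 0.8, "browInnerUp": 0.2}
actions = triggered_actions(frame_scores, config)
new_position = move_cursor((100, 100), (2, -1), config)
```

Raising a binding's threshold makes a gesture harder to trigger by accident, while lowering it helps users with a limited range of motion. That is the kind of per-user tuning the announcement describes.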

Although Project Gameface was initially designed for gamers, Google has partnered with Incluzza, a social enterprise focused on accessibility in India, to explore expanding it to other settings such as work, school, and social situations.

The inspiration for Project Gameface came from Lance Carr, a quadriplegic video game streamer who has muscular dystrophy. Carr collaborated with Google on the project, aiming to create a more affordable and accessible alternative to expensive head-tracking systems.
